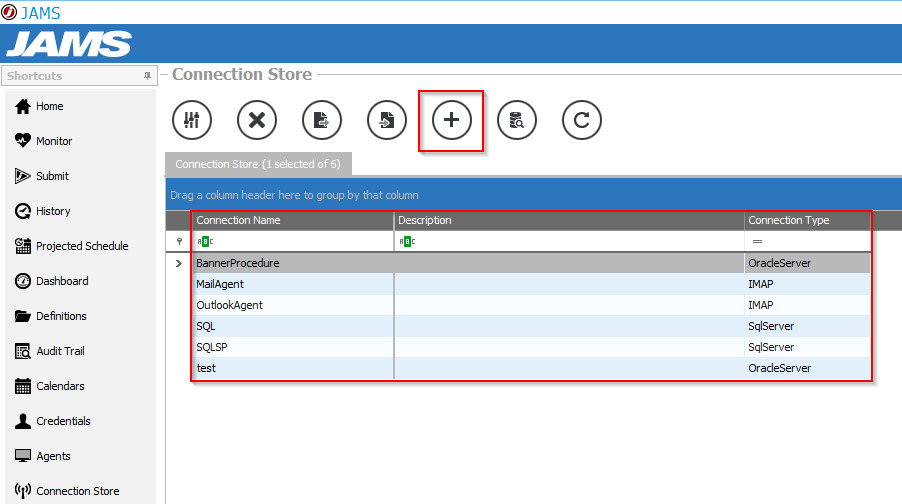

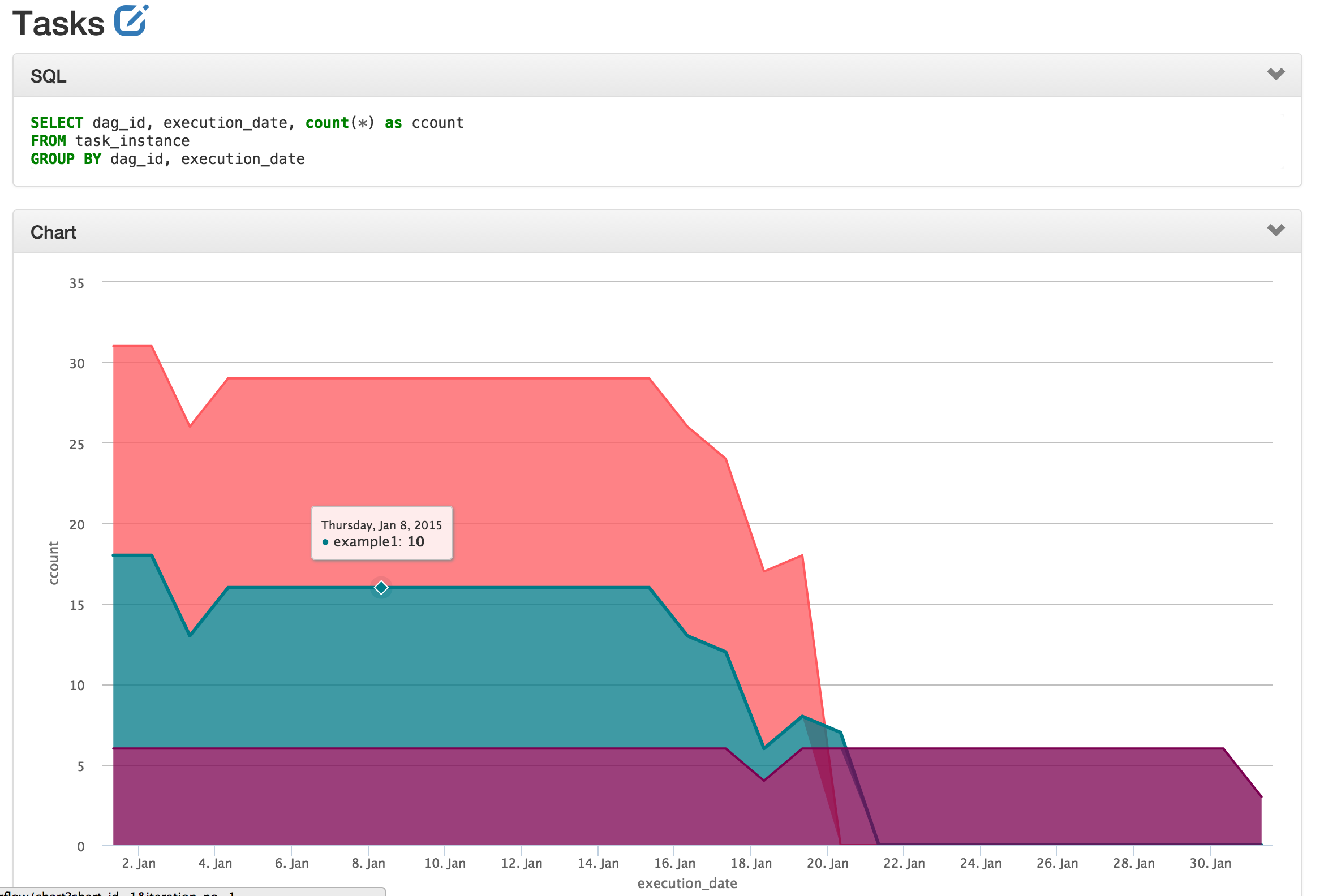

Is_correct integer := (SELECT relispopulated FROM temporary_table_to_be_used WHERE views LIKE '%>%') ĮXECUTE 'REFRESH MATERIALIZED VIEW ' || view_to_refresh || ' WITH DATA ' ĮXECUTE 'REFRESH MATERIALIZED VIEW CONCURRENTLY ' || view_to_refresh || ' WITH DATA ' ĭrop_exist_temporary_view = PostgresOperator(Ĭreate_temporary_view = PostgresOperator(ĭrop_exist_temporary_view > create_temporary_view > use_temporary_viewĪt the end of execution, I receive the following message: INFO - Subtask: psycopg2.ProgrammingError: relation "temporary_table_to_be_used" does not exist ,CASE WHEN relispopulated = 'true' THEN 1 ELSE 0 END AS relispopulated To emulate a simple example, I use this code (do not run, just see the objects): import osįrom _operator import PostgresOperatorĭrop_exist_temporary_view = "DROP TABLE IF EXISTS temporary_table_to_be_used "ĬREATE TEMPORARY TABLE temporary_table_to_be_used AS In other words: We lose all temporary objects of the previous component of the DAG. Using the Postgres connection in Airflow and the "PostgresOperator" the behaviour that I found was: For each execution of a PostgresOperator we have a new connection (or session, you name it) in the database. INTO default_glue_catalog.database_a137bd.I'm using Airflow for some ETL things and in some stages, I would like to use temporary tables (mostly to keep the code and data objects self-contained and to avoid to use a lot of metadata tables). INTO default_glue_catalog.database_a137bd.orders_raw_data ĬREATE SYNC JOB load_sales_info_raw_data_from_s3 Create streaming jobs to ingest raw orders and sales data into the staging tables.ĬREATE SYNC JOB load_orders_raw_data_from_s3

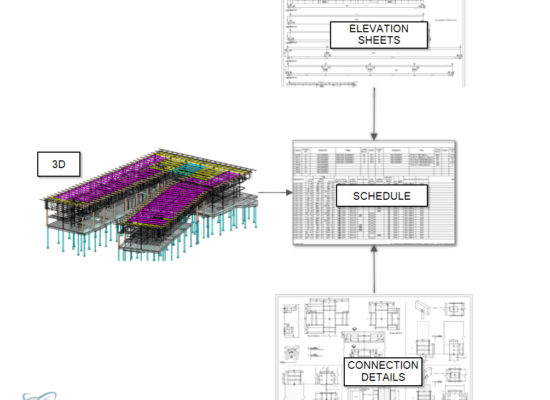

Create empty tables to use as staging for orders.ĬREATE TABLE default_glue_catalog.database_a137bd.orders_raw_data()ĬREATE TABLE default_glue_catalog.database_a137bd.sales_info_raw_data() Run the following code in SQLake /* Ingest data */ĬREATE S3 CONNECTION airflow_alternative_pipelines_samplesĪWS_ROLE = 'arn:aws:iam::949275490180:role/samples_role'ĮXTERNAL_ID = 'AIRFLOW_ALTERNATIVE_SAMPLES' Here is a code example of joining multiple S3 data sources into SQLake and applying simple enrichments to the data.
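The session behaviour described in the Airflow question above can be reproduced without a Postgres server. The sketch below is an illustration only, under stated assumptions: it uses Python's built-in `sqlite3` module as a stand-in for Postgres (SQLite temporary tables are likewise visible only to the connection that created them), and the helper `run_in_new_session`, the `demo` function and the `'my_view'` row are hypothetical names that do not appear in the original code.

```python
import os
import sqlite3
import tempfile

# Stand-in statements; the table and column names follow the question,
# the 'my_view' row is a made-up value for illustration.
CREATE_TMP = (
    "CREATE TEMPORARY TABLE temporary_table_to_be_used AS "
    "SELECT 'my_view' AS views, 1 AS relispopulated"
)
USE_TMP = (
    "SELECT relispopulated FROM temporary_table_to_be_used "
    "WHERE views LIKE '%my_view%'"
)


def run_in_new_session(db_path, statements):
    """Open a fresh connection, run the statements in order, return the last result."""
    conn = sqlite3.connect(db_path)
    try:
        rows = []
        for sql in statements:
            rows = conn.execute(sql).fetchall()
        conn.commit()
        return rows
    finally:
        conn.close()  # closing the session drops its temporary tables


def demo():
    fd, db_path = tempfile.mkstemp(suffix=".db")
    os.close(fd)
    try:
        # One session per step, like one operator execution per task.
        run_in_new_session(db_path, [CREATE_TMP])
        try:
            run_in_new_session(db_path, [USE_TMP])
            separate_sessions_ok = True
        except sqlite3.OperationalError:
            # The second session cannot see the first session's temp table.
            separate_sessions_ok = False

        # Both steps in a single session: the temp table is still there.
        same_session_rows = run_in_new_session(db_path, [CREATE_TMP, USE_TMP])
        return separate_sessions_ok, same_session_rows
    finally:
        os.remove(db_path)


if __name__ == "__main__":
    print(demo())
```

In Airflow terms, this suggests keeping the statements that share a temporary table inside a single task. The `sql` argument of `PostgresOperator` accepts a list of statements, which should then run on one connection, though that is worth verifying against the provider version in use.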
